Why Structured Credibility Scoring Changes Everything for UAP Research

The UAP field has a credibility problem. Not because the data is weak — but because nobody agrees on how to measure it.

Every congressional hearing, every FOIA release, every whistleblower statement gets filtered through the same broken lens: gut instinct, tribal loyalty, and algorithmic noise. The result is a discourse where David Grusch and a random anonymous poster on Reddit occupy the same epistemic plane.

That ends now.

The Problem With "Trust Me, Bro" Intelligence

For decades, UAP research has operated on an informal credibility system. A source is "good" if the right people vouch for them. A document is "real" if it looks official enough. A claim is "credible" if it confirms existing priors.

This is not intelligence analysis. This is fan culture.

The Intelligence Community uses structured analytic techniques: Analysis of Competing Hypotheses (ACH), source reliability matrices, and calibrated confidence levels. The UAP disclosure community uses none of them. Until now.
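To make "structured" concrete, here is a minimal sketch of the core ACH scoring step as Richards Heuer describes it. You rate every piece of evidence against every hypothesis, then rank the hypotheses by how much evidence contradicts them. The hypotheses, evidence labels (`E1` to `E3`), ratings, and weights below are invented placeholders, not QDD data or an official ACH implementation.

```python
from typing import Dict, List, Tuple

# Ratings: "C" = consistent, "I" = inconsistent, "N" = neutral / not applicable.
Matrix = Dict[str, Dict[str, str]]  # evidence item -> {hypothesis: rating}


def inconsistency_scores(matrix: Matrix, weights: Dict[str, float]) -> Dict[str, float]:
    """Sum the weight of every evidence item rated inconsistent with each hypothesis."""
    scores: Dict[str, float] = {}
    for evidence, ratings in matrix.items():
        weight = weights.get(evidence, 1.0)  # evidence without an explicit weight counts as 1.0
        for hypothesis, rating in ratings.items():
            scores.setdefault(hypothesis, 0.0)
            if rating == "I":
                scores[hypothesis] += weight
    return scores


def rank_hypotheses(matrix: Matrix, weights: Dict[str, float]) -> List[Tuple[str, float]]:
    """ACH favors the hypothesis with the LEAST evidence against it, not the most for it."""
    return sorted(inconsistency_scores(matrix, weights).items(), key=lambda kv: kv[1])


# Hypothetical hypotheses and evidence items, for illustration only.
matrix: Matrix = {
    "E1": {"sensor_artifact": "I", "conventional_aircraft": "C", "unresolved": "C"},
    "E2": {"sensor_artifact": "I", "conventional_aircraft": "I", "unresolved": "C"},
    "E3": {"sensor_artifact": "N", "conventional_aircraft": "I", "unresolved": "N"},
}
weights = {"E1": 1.0, "E2": 2.0, "E3": 0.5}

print(rank_hypotheses(matrix, weights))
# → [('unresolved', 0.0), ('conventional_aircraft', 2.5), ('sensor_artifact', 3.0)]
```

The key design choice is counting disconfirming evidence. Consistent evidence often fits several hypotheses at once, so it discriminates poorly between them.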

How QDD's Multi-Vector Credibility Audit Works

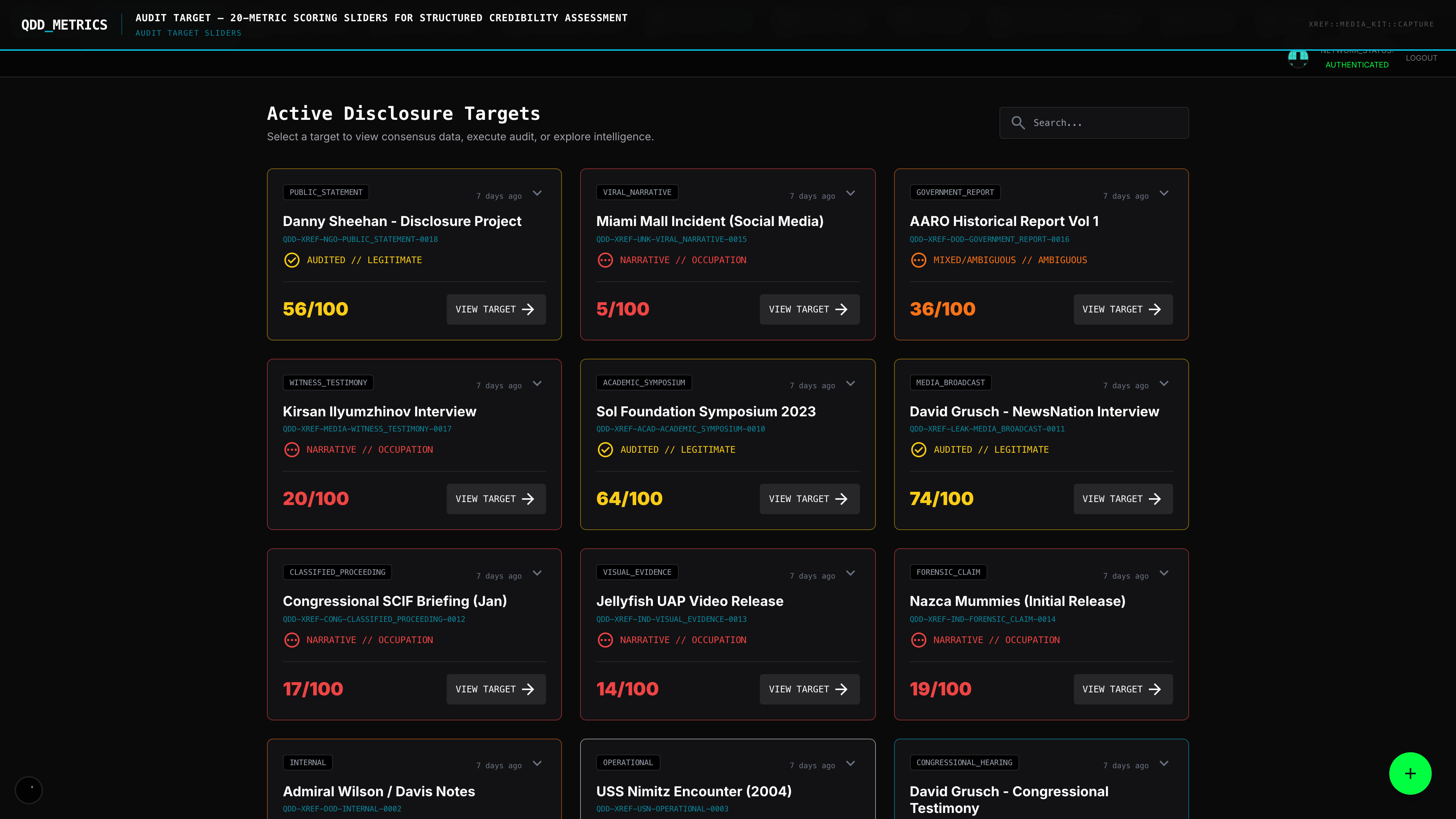

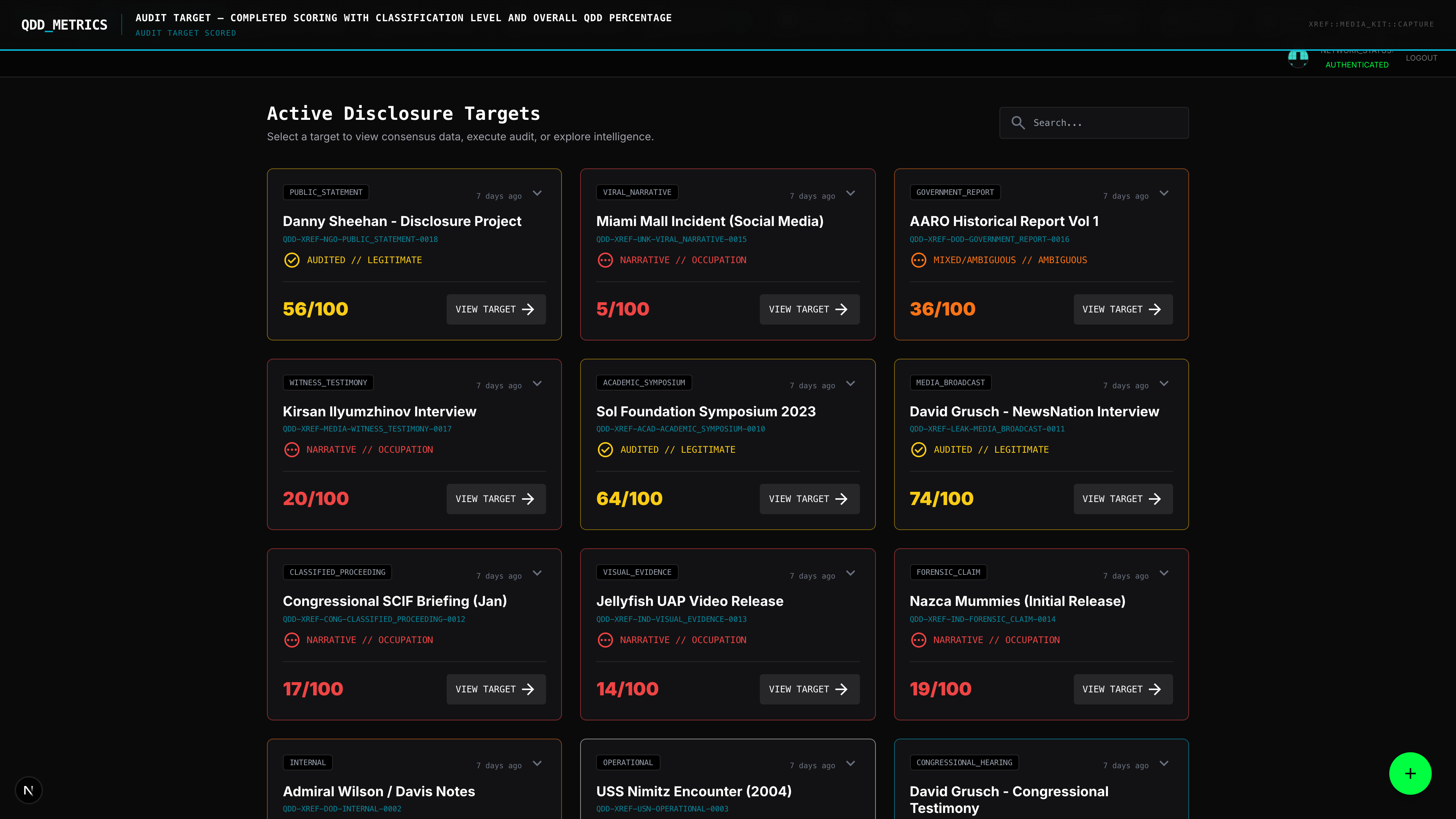

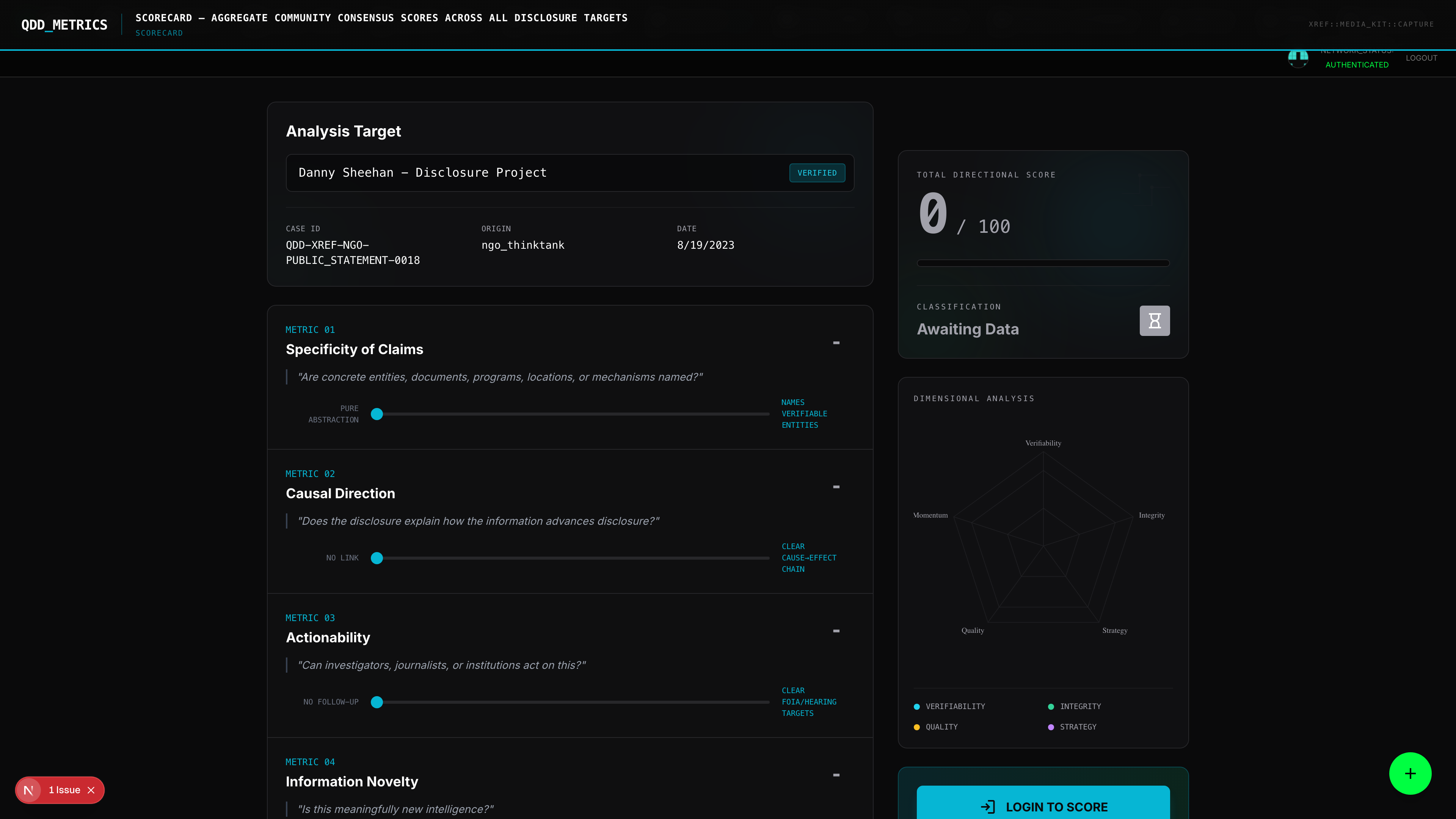

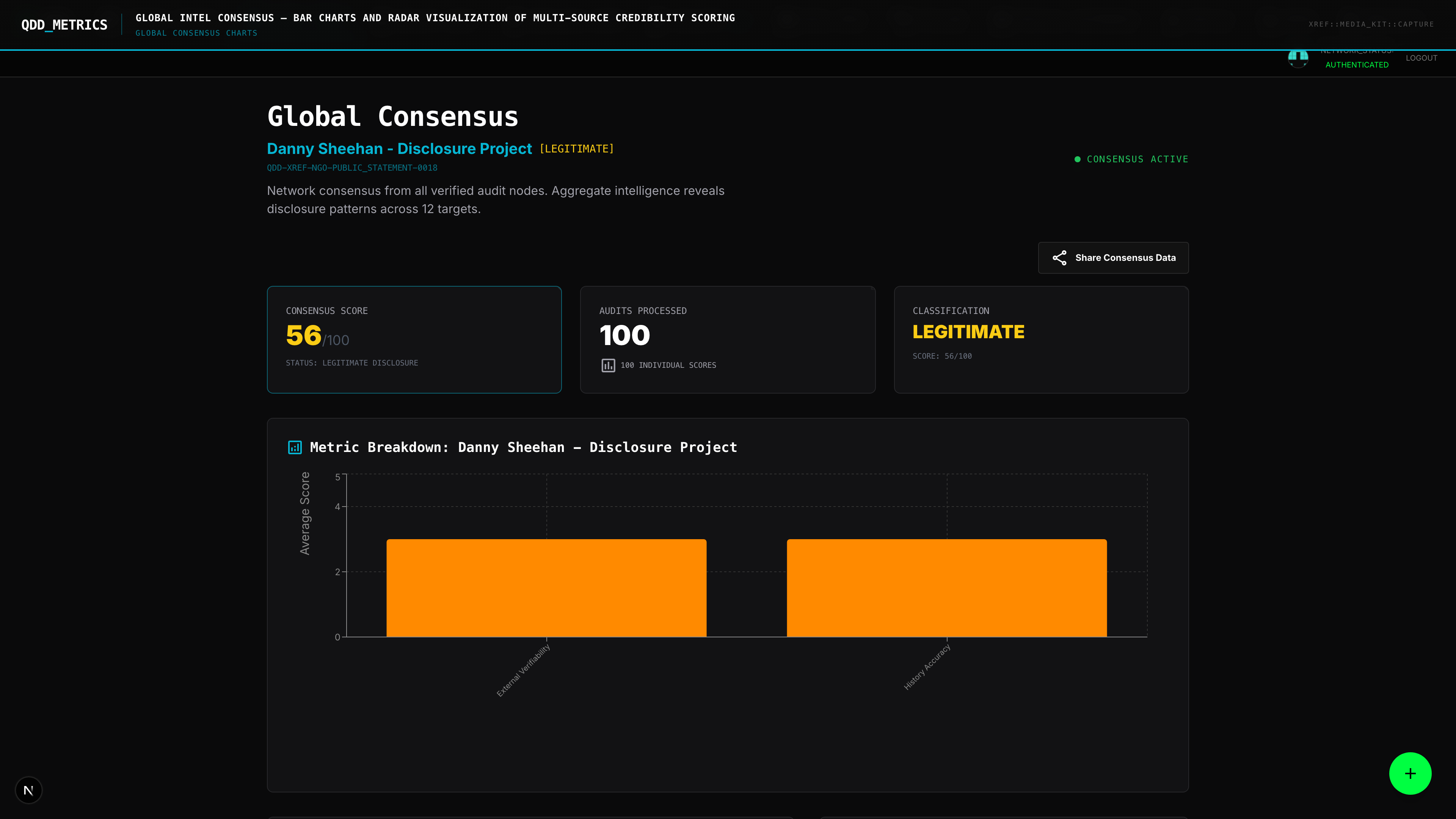

QDD, the Quantified Disclosure Dashboard, introduces a structured credibility scoring system built on measurable vectors, not vibes.

Every entity in the system — whether it's a person, organization, document, or event — gets scored across multiple dimensions:

Source Reliability. How many independently verifiable claims has this source made? What is their track record? AARO's historical accuracy gets the same scrutiny as a whistleblower's.

Corroboration Depth. Does other evidence support the claim? A statement from David Grusch carries different weight when corroborated by Inspector General findings versus standing alone.

Document Provenance. For FOIA releases and leaked documents, QDD tracks chain of custody, classification markers, and institutional origin. The 2017 New York Times report on AATIP scores differently than an unsourced PDF on a forum.

Temporal Consistency. Do claims hold up over time? Predictions that resolve correctly boost the credibility of their source. Predictions that fail reduce it. The system has memory.
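The article does not publish QDD's formulas, so the following is only a minimal sketch of how the four vectors could be computed and combined. It assumes each vector is normalized to [0, 1] and merged by a weighted average. The `EvidenceRecord` fields, the Beta(1, 1) smoothing for track records, the halving rule for corroboration, the three-check provenance score, and the `WEIGHTS` values are all hypothetical choices, not QDD's actual schema or method.

```python
from dataclasses import dataclass


@dataclass
class EvidenceRecord:
    """Hypothetical per-entity inputs; field names are illustrative, not QDD's schema."""
    verified_claims: int              # claims independently confirmed
    refuted_claims: int               # claims shown to be false
    independent_corroborations: int   # independent sources backing the claim
    has_chain_of_custody: bool
    has_classification_markers: bool
    origin_known: bool
    predictions_correct: int
    predictions_failed: int


def beta_mean(successes: int, failures: int) -> float:
    """Posterior mean under a Beta(1, 1) prior: a new source starts at 0.5, not 0 or 1."""
    return (successes + 1) / (successes + failures + 2)


def corroboration_depth(n: int) -> float:
    """Diminishing returns: each independent corroboration halves the remaining gap to 1."""
    return 1.0 - 0.5 ** n


def provenance(rec: EvidenceRecord) -> float:
    """Fraction of provenance checks the document passes."""
    checks = [rec.has_chain_of_custody, rec.has_classification_markers, rec.origin_known]
    return sum(checks) / len(checks)


def record_prediction(rec: EvidenceRecord, correct: bool) -> None:
    """Temporal memory: every resolved prediction permanently updates the counts."""
    if correct:
        rec.predictions_correct += 1
    else:
        rec.predictions_failed += 1


# Hypothetical weights; they sum to 1 so the composite stays in [0, 1].
WEIGHTS = {"source": 0.3, "corroboration": 0.3, "provenance": 0.2, "temporal": 0.2}


def credibility(rec: EvidenceRecord) -> dict:
    vectors = {
        "source": beta_mean(rec.verified_claims, rec.refuted_claims),
        "corroboration": corroboration_depth(rec.independent_corroborations),
        "provenance": provenance(rec),
        "temporal": beta_mean(rec.predictions_correct, rec.predictions_failed),
    }
    vectors["composite"] = sum(WEIGHTS[k] * vectors[k] for k in WEIGHTS)
    return vectors


rec = EvidenceRecord(8, 2, 2, True, True, False, 1, 3)
print(credibility(rec))  # source 0.75, corroboration 0.75, composite about 0.65
record_prediction(rec, correct=True)
print(credibility(rec)["temporal"])  # rises from 1/3 to 3/7
```

Smoothed counts keep a single hit or miss from swinging a score to an extreme. Because the counts are never reset, the score retains its memory as predictions resolve.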

Why This Matters for Disclosure

The UAP Disclosure Act of 2023, championed by Senate Majority Leader Chuck Schumer and Senator Mike Rounds, was largely stripped out of the final FY2024 National Defense Authorization Act during House-Senate negotiations. One reason: opponents argued the evidence base was too weak to justify the legislation.

They were wrong. But the disclosure community couldn't prove it — because there was no structured, auditable framework to point to.

QDD changes that calculus.

When every claim, source, and prediction is scored and tracked in the open, the conversation shifts from "who do you believe?" to "what does the data show?" That's a harder argument to dismiss in a SCIF or a committee hearing.

The Open Audit Advantage

Every score in QDD is transparent. Every input is visible. Every methodology is documented and open-source on GitHub.
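"Every input is visible" implies that anyone should be able to recompute a published score by hand. The sketch below shows one way to do that. It emits every input, weight, vector score, and per-vector contribution as JSON, and it provides a `verify` helper that re-derives the composite from the published numbers. The function names, record layout, and example values are hypothetical, not QDD's published format.

```python
import json
from typing import Dict


def audit_record(entity: str, inputs: Dict[str, float],
                 vector_scores: Dict[str, float], weights: Dict[str, float]) -> str:
    """Publish everything needed to recompute the composite score."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    contributions = {k: weights[k] * vector_scores[k] for k in weights}
    record = {
        "entity": entity,
        "inputs": inputs,
        "weights": weights,
        "vector_scores": vector_scores,
        "contributions": contributions,
        "composite": sum(contributions.values()),
    }
    return json.dumps(record, indent=2, sort_keys=True)


def verify(record_json: str, tol: float = 1e-9) -> bool:
    """Independently recompute the composite from the published weights and scores."""
    rec = json.loads(record_json)
    recomputed = sum(rec["weights"][k] * rec["vector_scores"][k] for k in rec["weights"])
    return abs(recomputed - rec["composite"]) <= tol


# Hypothetical example values for illustration only.
weights = {"source": 0.3, "corroboration": 0.3, "provenance": 0.2, "temporal": 0.2}
scores = {"source": 0.75, "corroboration": 0.75, "provenance": 0.5, "temporal": 0.5}
published = audit_record("example-entity", {"verified_claims": 8, "refuted_claims": 2},
                         scores, weights)
print(published)
print(verify(published))
```

If a published composite does not match what its own inputs and weights produce, `verify` returns `False`. That is the practical meaning of an open audit: a third party can check the score rather than take it on trust.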

This is the opposite of how the IC operates — and that's the point. The classification system has been weaponized to suppress UAP data for decades. An open-source credibility framework is a direct counter to that information asymmetry.

What Comes Next

The credibility scoring system is live in QDD's public beta. It currently covers key figures in the disclosure ecosystem, from Grusch to former AARO Director Dr. Sean Kirkpatrick to congressional allies such as Representatives Tim Burchett and Anna Paulina Luna.

As the knowledge graph grows, so does the scoring resolution. More entities. More connections. More signal.

The days of credibility by consensus are over. The audit is open. The data is live.